Vitalik Buterin: The Final Battle of Ethereum

Author: Vitalik Buterin

Translation: Block unicorn

Special thanks to a large group of people from Optimism and Flashbots for their discussions and ideas on this article, as well as feedback and reviews from Karl Floersch, Phil Daian, and Alex Obadia.

Consider the average "big blockchain": very high block frequency, very large block size, many thousands of transactions per second, but also highly centralized. Because the blocks are so large, only a few dozen or a few hundred nodes can afford to run a fully participating node that can create blocks or verify the existing chain. What would it take to make such a chain acceptably trustless and censorship-resistant, at least by my standards?

Here is a reasonable roadmap:

Add a second tier of staking with low resource requirements to perform distributed block validation. The transactions in a block are split into 100 buckets, with a Merkle or Verkle tree state root after each bucket. Each secondary staker is randomly assigned to one of the buckets. A block is only accepted if at least 2/3 of the secondary stakers assigned to each bucket sign off on it.

Introduce fraud proofs or ZK-SNARKs to allow users to directly (and cheaply) check block validity. ZK-SNARKs can cryptographically prove block validity directly; fraud proofs are a simpler scheme in which, if a block has an invalid bucket, anyone can broadcast a fraud proof for just that bucket. This provides another layer of security on top of the randomly assigned validators.

Introduce data availability sampling (DAS) to allow users to check block availability. With DAS checks, light clients can verify that a block was published by downloading only a few randomly selected pieces of it.

Add secondary transaction channels to prevent censorship. One way is to allow secondary stakers to submit a list of transactions that the next main block must include.
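The committee rule in the first step and the forced-inclusion rule in the last step can be sketched together as a single acceptance check. This is only an illustrative sketch, not a protocol specification: the function names, the seeded `random.Random` standing in for a protocol randomness source, and the rule that an empty committee cannot approve a bucket are all assumptions made for the example.

```python
import random

NUM_BUCKETS = 100  # from the text: a block's transactions are split into 100 buckets


def assign_committees(stakers, num_buckets, seed):
    """Randomly assign each secondary staker to exactly one bucket.

    The seeded RNG is a stand-in for protocol randomness (assumption).
    """
    rng = random.Random(seed)
    committees = {bucket: [] for bucket in range(num_buckets)}
    for staker in stakers:
        committees[rng.randrange(num_buckets)].append(staker)
    return committees


def bucket_accepted(committee, signers):
    """True if at least 2/3 of the stakers assigned to the bucket signed."""
    if not committee:
        return False  # assumption: an empty committee cannot approve anything
    signed = sum(1 for staker in committee if staker in signers)
    return 3 * signed >= 2 * len(committee)  # integer form of signed/len >= 2/3


def block_accepted(committees, signatures, block_txs, inclusion_list):
    """Accept a block only if every bucket reaches the 2/3 threshold and
    every transaction the secondary stakers demanded is in the block.

    signatures maps bucket index -> set of stakers who signed that bucket.
    """
    buckets_ok = all(
        bucket_accepted(committee, signatures.get(bucket, set()))
        for bucket, committee in committees.items()
    )
    forced_ok = set(inclusion_list) <= set(block_txs)
    return buckets_ok and forced_ok
```

Under these assumptions, a block producer who skips a transaction from the inclusion list, or who cannot gather 2/3 of the signatures for even one bucket, fails `block_accepted` regardless of how much of the rest of the block is valid.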

What do we get after doing all this? We get a chain where block production is still centralized, but block validation is trustless and highly decentralized, and specialized anti-censorship machinery prevents block producers from censoring. Aesthetically, this is somewhat ugly, but it does provide the fundamental guarantee we are looking for: even if every primary staker (block producer) intends to attack or censor, the worst they can do is go completely offline, at which point the chain stops accepting transactions until the community pools its resources and sets up an honest primary-staker node.
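To give a feel for why the data availability sampling step needs only a few downloads, here is a small worked calculation. It rests on assumptions that are not stated in the article: the block is erasure-coded so that any half of its pieces suffices to reconstruct it (so an attacker hiding the block must withhold more than half), and a light client samples pieces independently and uniformly. The function names are hypothetical.

```python
def undetected_probability(available_fraction, samples):
    """Chance that every one of `samples` independent random queries
    happens to hit an available piece, so withholding goes unnoticed."""
    return available_fraction ** samples


def samples_needed(available_fraction, target):
    """Smallest number of samples that pushes the chance of being fooled
    down to `target` or below."""
    count = 0
    probability = 1.0
    while probability > target:
        probability *= available_fraction
        count += 1
    return count


# Assumed worst case: the attacker publishes just under half of the pieces.
print(samples_needed(0.5, 1e-9))  # number of samples for a one-in-a-billion miss
```

Under these assumptions each extra sample halves the chance of being fooled, which is why the number of pieces a light client must fetch grows only logarithmically with the confidence it wants.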

Now, consider a possible long-term future of Rollups…

Imagine a specific rollup (whether it is Arbitrum, Optimism, zkSync, or something entirely new) that does an excellent job of engineering its node implementation, to the point where it can process 10,000 transactions per second if given sufficiently powerful hardware. The techniques for doing this are well known in principle, and implementations were built years ago by Dan Larimer and others: split execution into one CPU thread that runs the cheap but unparallelizable business logic, and many other threads that run the expensive but highly parallelizable cryptography. Also imagine that Ethereum implements sharding with data availability sampling, and has the space to store that rollup's on-chain data across its 64 shards. What would that world look like?

Once again, we find ourselves in a world where block production is centralized, block validation is trustless and highly decentralized, and censorship is still prevented. Rollup block producers must process a large volume of transactions, which makes it a difficult market to enter, but they cannot push invalid blocks through. Block availability is guaranteed by the underlying chain, and block validity is guaranteed by the rollup logic: a ZK-rollup is secured by SNARKs, and an optimistic rollup is secure as long as there is one honest participant somewhere running a fraud-prover node (such nodes can be subsidized with Gitcoin grants). Moreover, because users can always submit transactions through the on-chain secondary inclusion channel, rollup sequencers cannot effectively censor either.

Now, consider another possible long-term future of rollups…

No single rollup succeeds in holding anything close to the majority of Ethereum activity. Instead, they all top out at a few hundred transactions per second. We get a multi-rollup future for Ethereum: the Cosmos multi-chain vision, but built on a base layer that provides data availability and shared security. Users frequently rely on cross-rollup bridges to jump between rollups without paying the high fees of the main chain. What would that world look like?

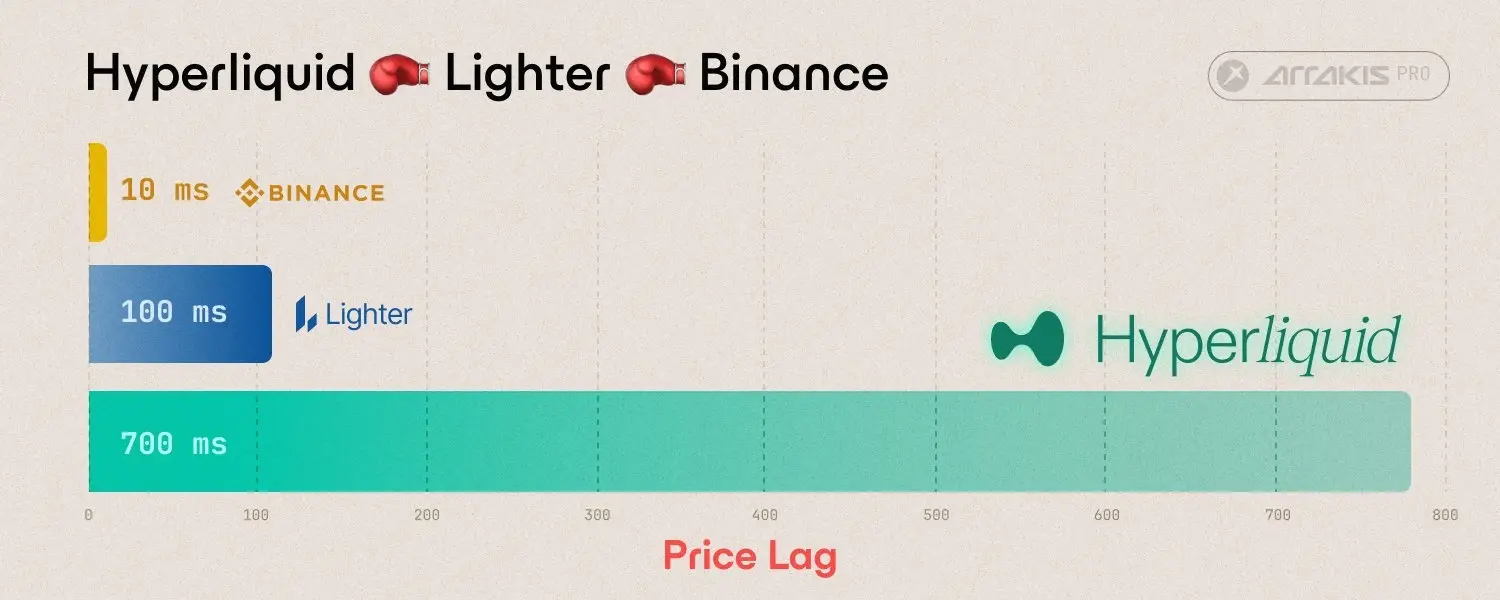

It seems we could have it all: decentralized validation, strong censorship resistance, and even distributed block production, because the rollups are all small and so it is easy to start producing blocks on them. However, the decentralization of block production may not last, because of the possibility of cross-domain MEV. Being able to construct the next block on many domains at the same time has many benefits: you can create blocks that exploit arbitrage opportunities that depend on making transactions in two rollups, or in one rollup and the main chain, or in even more complex combinations.

Cross-domain MEV opportunities discovered by Western Gate

Thus, in a multi-domain world, there are strong pressures toward the same people controlling block production on all domains. It may not happen, but there is a good chance that it will, and we must be prepared for that possibility. What can we do about it? So far, the best approach we know is to combine two techniques:

Rollups implement some mechanism for auctioning off block production at each slot, or the Ethereum base layer implements proposer/builder separation (PBS), or both. This at least ensures that any centralizing tendency in block production does not lead to a completely elite-captured and concentrated staking pool market dominating block validation.

Rollups implement censorship-resistant bypass channels, and the Ethereum base layer implements PBS anti-censorship techniques. This ensures that if the winners of the potentially highly centralized "pure" block production market attempt to censor transactions, there is a way to bypass the censorship.
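The two techniques above can be pictured as a per-slot auction followed by a bypass check. The sketch below is only an illustration under stated assumptions: the `Bid` type, the highest-payment-wins rule, and the idea that forced transactions are checked against the winning block are simplifications invented for this example and do not describe any deployed PBS design.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Bid:
    builder: str                 # hypothetical identifier for a block builder
    payment: int                 # amount the builder offers for the slot
    transactions: Sequence[str]  # transactions in the builder's block


def select_winning_bid(bids: Sequence[Bid]) -> Optional[Bid]:
    """Pick the highest-paying bid for this slot (first one wins on ties)."""
    if not bids:
        return None
    return max(bids, key=lambda bid: bid.payment)


def respects_bypass_channel(bid: Bid, forced_txs: Sequence[str]) -> bool:
    """True if the block includes every transaction submitted through the
    bypass channel (assumed here to be mandatory for the slot)."""
    return set(forced_txs) <= set(bid.transactions)


def choose_block(bids: Sequence[Bid], forced_txs: Sequence[str]) -> Optional[Bid]:
    """Run the auction only among bids that do not censor forced transactions."""
    eligible = [bid for bid in bids if respects_bypass_channel(bid, forced_txs)]
    return select_winning_bid(eligible)
```

The point of the sketch is the separation of concerns: the auction can be won by a highly specialized builder, but a builder who drops a forced transaction is simply not eligible to win.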

So what’s the result? Block production is centralized, block validation is trustless and highly decentralized, and censorship can still be prevented.

Three paths leading to the same destination

So what does this mean?

While there are many ways to build a scalable and secure long-term blockchain ecosystem, they all seem to be heading toward a very similar future. There is a high chance that block production will end up centralized: either the network effects within rollups or the network effects of cross-domain MEV push us in that direction, each in its own way. What we can do, however, is use protocol-level techniques such as committee validation, data availability sampling, and bypass channels to "regulate" this market, ensuring that the winners cannot abuse their power.

What does this mean for block producers? Block production is likely to become a specialized market, and the domain expertise is likely to carry over between domains. Around 90% of what makes a good Optimism block producer also makes a good Arbitrum block producer, a good Polygon block producer, and even a good Ethereum base layer block producer. If there are many domains, cross-domain arbitrage could also become an important source of revenue.

What does this mean for Ethereum? First, despite the inherent uncertainty, Ethereum is very well positioned to adapt to this future world. The profound benefit of a rollup-centric roadmap is that Ethereum stays open to all of these futures and does not have to commit to an opinion about which one will win. Will users very strongly want to be on a single rollup? Ethereum, following its existing roadmap, can be the base layer for that, automatically providing the anti-fraud and anti-censorship "armor" that high-capacity domains need in order to be secure. Is making a high-capacity domain too technically complicated, or do users simply have a strong need for variety? Ethereum can be the base layer for that too, and a very good one, because the shared root of trust makes it much easier to move assets between rollups safely and cheaply.

Moreover, Ethereum researchers should think hard about what degree of decentralization in block production is actually achievable. If cross-domain MEV (or even cross-shard MEV from one rollup occupying multiple shards) makes it unsustainable regardless, then adding complicated plumbing to make highly decentralized block production easy may not be worth it.

What does this mean for big blockchains? They have a path to becoming trustless and censorship-resistant, and we will soon find out whether their core developers and communities truly value censorship resistance and decentralization enough to make it happen!

All of this may take years to accomplish. Sharding and data availability sampling are complex technologies to implement. It will take years of improvements and audits before people can confidently store their assets in a ZK-rollup running a full EVM. Cross-domain MEV research is still in its infancy. However, the realistic and bright future of scalable blockchains seems to be becoming increasingly clear.