The Survival of ZKVM: A Detailed Explanation of Factional Struggles

Author: Bryan, IOSG Ventures

Table of Contents

Circuit Implementation of ZKP Proof Systems - Circuit-based VS VM-based

Design Principles of ZKVM

Comparison between STARK-based VMs

Why Risc0 is Exciting

Preface:

Rollup discussion in 2022 seemed to concentrate on ZkEVM, but let's not forget that ZkVM is another means of scaling. Although ZkEVM is not the focus of this article, it is worth reflecting on several dimensions along which ZkVM and ZkEVM differ:

1. Compatibility: While both are scaling solutions, their focuses are different. ZkEVM emphasizes direct compatibility with the existing EVM, while ZkVM aims for complete scalability, optimizing the logic and performance of dapps, where compatibility is not the primary concern. Once the underlying system is well-established, EVM compatibility can also be achieved.

2. Performance: Both have foreseeable performance bottlenecks. The main bottleneck for ZkEVM lies in the compatibility with EVM, which incurs unnecessary costs when encapsulated in a ZK proof system. The bottleneck for ZkVM arises from the introduction of the ISA, leading to more complex final output constraints.

3. Developer Experience: Type II ZkEVM (such as Scroll, Taiko) focuses on compatibility with EVM Bytecode, meaning that EVM code at the Bytecode level and above can generate corresponding zero-knowledge proofs through ZkEVM. For ZkVM, there are two directions: one is to create its own DSL (like Cairo), and the other is to aim for compatibility with existing mature languages like C++/Rust (like Risc0). In the future, we expect native Solidity Ethereum developers to migrate to ZkEVM at no cost, while more powerful applications will run on ZkVM.

Many readers will still remember the image below; CairoVM stands apart from the ZkEVM factional struggle fundamentally because its design philosophy is different.

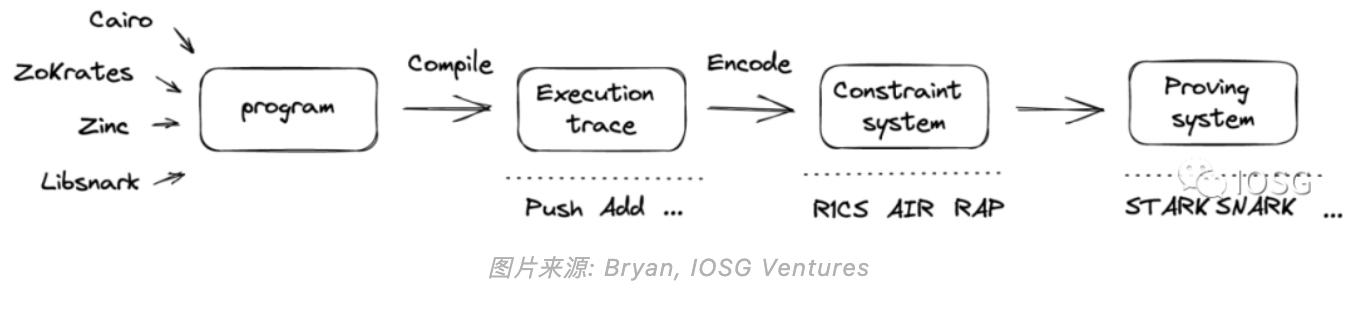

Before discussing ZkVM, we first consider how ZK proof systems are implemented in blockchains. Broadly speaking, there are two ways to implement circuits: circuit-based systems and VM-based systems.

A circuit-based system converts a program directly into constraints and sends them to the proving system. A VM-based system executes the program through an instruction set architecture (ISA), generating an execution trace in the process. This execution trace is then mapped into constraints and sent to the proving system.

In a circuit-based system, the computation is bounded only by the machine that executes the program. In a VM-based system, the ISA is embedded in the circuit generator, which produces the constraints for the program, and the circuit generator itself has limits on instruction set, execution cycles, memory, and so on. The virtual machine provides generality: any machine can run a program as long as its execution stays within those limits.
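To make the two pipelines concrete, here is a minimal sketch in Python. It is a toy, not how any of the projects discussed here work: the modulus `P`, the three opcodes, and the function names are all assumptions made up for illustration. The circuit-based half hard-codes constraints for one program, while the VM-based half runs any program over a fixed instruction set, records a trace, and maps that trace to constraints with one program-independent rule.

```python
# Toy contrast between a circuit-based and a VM-based constraint pipeline.
# All names (P, OPS, run_vm, ...) are hypothetical and exist only for this sketch.

P = 97  # small prime modulus, chosen only to keep the numbers readable


def circuit_constraints(x, t, y):
    """Circuit-based: constraints written for ONE program, y = x*x + 3.

    t is the intermediate wire x*x. A residual of 0 means "satisfied".
    """
    return [(t - x * x) % P, (y - (t + 3)) % P]


# VM-based: a fixed instruction set; every opcode updates a single accumulator.
OPS = {
    "ADDI": lambda acc, arg: (acc + arg) % P,
    "MULI": lambda acc, arg: (acc * arg) % P,
    "SQUARE": lambda acc, arg: (acc * acc) % P,  # arg is ignored
}


def run_vm(program, x):
    """Execute (opcode, arg) pairs and record one trace row per step."""
    acc, trace = x % P, []
    for op, arg in program:
        nxt = OPS[op](acc, arg)
        trace.append((op, arg, acc, nxt))  # (opcode, arg, acc_before, acc_after)
        acc = nxt
    return trace


def trace_constraints(trace):
    """Map a trace to constraint residuals; the mapping is the same for any program."""
    residuals = []
    for i, (op, arg, before, after) in enumerate(trace):
        residuals.append((after - OPS[op](before, arg)) % P)  # instruction semantics
        if i + 1 < len(trace):
            residuals.append((trace[i + 1][2] - after) % P)  # rows chain together
    return residuals


program = [("SQUARE", 0), ("ADDI", 3)]  # the same y = x*x + 3, now as VM code
trace = run_vm(program, 5)
print(circuit_constraints(5, 25, 28))
print(trace)
print(trace_constraints(trace))
```

Changing the program changes `circuit_constraints` entirely in the circuit-based case, whereas `trace_constraints` stays untouched in the VM-based case and only the trace grows or shrinks.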

In a virtual machine, a ZKP program roughly goes through the following process:

Advantages and Disadvantages:

From a developer's perspective, developing in a circuit-based system usually requires a deep understanding of the cost of each constraint. When writing programs for a virtual machine, by contrast, the circuit is fixed, and developers need to focus more on the instructions.

From a verifier's perspective, assuming the same pure SNARK is used as the backend, circuit-based systems and virtual machines differ significantly in circuit generality. A circuit system generates a different circuit for each program, while a virtual machine uses the same circuit for different programs. This means that in a rollup, a circuit system needs to deploy multiple verifier contracts on L1.

From an application perspective, virtual machines make the logic of applications more complex by embedding the memory model into the design, while the purpose of using circuit systems is to enhance program performance.

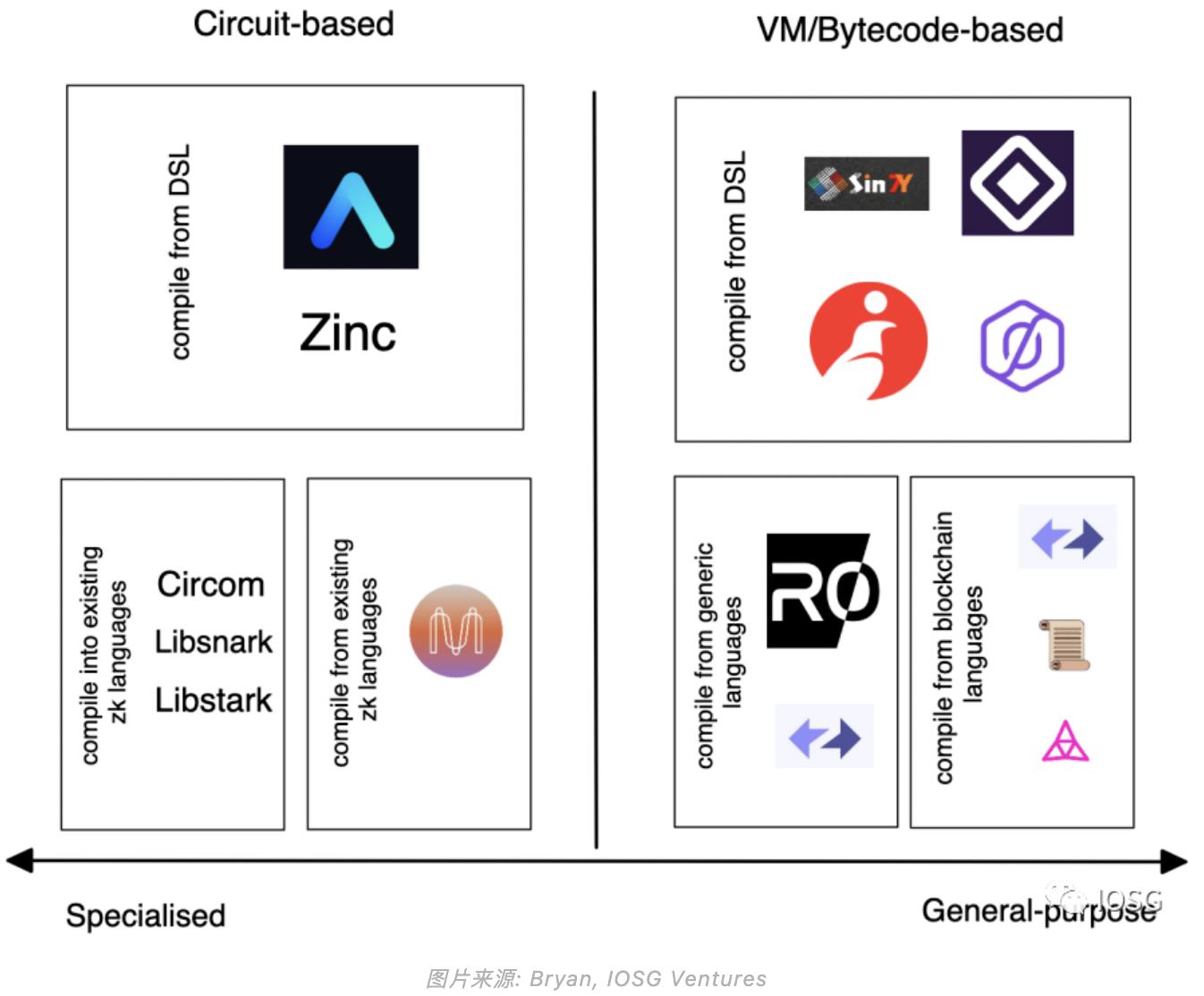

From the perspective of system complexity, virtual machines incorporate more complexity into the system, such as memory models, communication between host and guest, whereas circuit systems are more straightforward.

Here is a preview of different projects based on circuits and virtual machines currently in L1/L2:

Design Principles of Virtual Machines

In virtual machines, there are two key design principles. First, ensure that programs are executed correctly. In other words, the output (i.e., constraints) should correctly match the input (i.e., program). This is generally accomplished through the ISA instruction set. Second, ensure that the compiler works correctly when converting high-level languages into appropriate constraint formats.

1. ISA Instruction Set

The ISA defines how the circuit generator operates. Its main responsibility is to correctly map instructions to constraints, which are then sent to the proving system. ZK systems use RISC-style (reduced instruction set) ISAs. There are two options for the ISA:

The first is to build a custom ISA, as seen in the design of Cairo. Generally, there are four types of constraint logic.

The basic design focus of a custom ISA is to ensure that constraints are minimized as much as possible, allowing for fast execution and verification of the program.

The second is to use an existing ISA, as Risc0 does. In addition to aiming for short execution time, existing ISAs (like Risc-V) bring further benefits, such as being friendly to front-end languages and back-end hardware. One open question is whether existing ISAs will lag in verification time, since verification time was not a primary design goal of Risc-V.

2. Compiler

Broadly speaking, the compiler gradually translates programming languages into machine code. In the context of ZK, it refers to using high-level languages like C, C++, Rust, etc., to compile into low-level code representations of constraint systems (R1CS, QAP, AIR, etc.). There are two methods:

Design a compiler based on existing ZK circuit representations. In ZK, circuit representations range from directly callable libraries like Bellman to low-level languages like Circom. To bridge these representations, compilers like Zokrates (which is also a DSL) aim to provide an abstraction layer that can compile into any lower-level representation.

Build upon existing compiler infrastructure. The basic logic is to utilize an intermediate representation (IR) targeted at multiple front-ends and back-ends.

The Risc0 compiler is based on multi-level intermediate representation (MLIR), which can generate multiple IRs (similar to LLVM). Different IRs provide flexibility to developers, as each IR has its design focus, with some optimizations specifically targeting hardware, allowing developers to choose according to their preferences. Similar ideas can also be seen in vnTinyRAM and TinyRAM using GCC. ZkSync is another example that utilizes compiler infrastructure.

Additionally, you can see some compiler infrastructures targeted at zk, such as CirC, which also borrows some design concepts from LLVM.

Aside from the two most critical design steps mentioned above, there are some other considerations:

1. Trade-off between system security and verifier cost

The more bits of security the system targets, the higher the verification cost. The security level is fixed at key generation (for example, by the choice of elliptic curve in a SNARK).

2. Compatibility with front-end and back-end

Compatibility depends on the effectiveness of the intermediate representation (IR) for circuits. The IR needs to strike a balance between correctness (whether the program's output matches the input, and whether the output conforms to the proving system) and flexibility (supporting multiple front-ends and back-ends). If the IR was originally designed for low-degree constraint systems like R1CS, it is challenging to make it compatible with higher-degree constraint systems like AIR.
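The degree gap can be seen on a small, hypothetical statement, `x**3 + x + 5 == out`. The sketch below uses plain integers rather than a finite field for readability, and the witness layout is an assumption of the example. R1CS only admits rows of the form (A·s)·(B·s) = (C·s), so the cubic has to be flattened into four rows with three helper variables; a system that accepts higher-degree constraints could state the same relation directly.

```python
# Hypothetical example: the statement x**3 + x + 5 == out, checked two ways.

def dot(row, s):
    """Inner product of one matrix row with the witness vector."""
    return sum(a * b for a, b in zip(row, s))


def r1cs_satisfied(A, B, C, s):
    """Every R1CS row has the degree-2 form (A_i . s) * (B_i . s) == (C_i . s)."""
    return all(dot(a, s) * dot(b, s) == dot(c, s) for a, b, c in zip(A, B, C))


# Witness layout (an assumption of this sketch): s = [1, x, out, x2, x3, t]
# Flattening x**3 + x + 5 into degree-2 steps needs three helper variables:
#   x2 = x * x ; x3 = x2 * x ; t = x3 + x ; out = t + 5
A = [[0, 1, 0, 0, 0, 0], [0, 0, 0, 1, 0, 0], [0, 1, 0, 0, 1, 0], [5, 0, 0, 0, 0, 1]]
B = [[0, 1, 0, 0, 0, 0], [0, 1, 0, 0, 0, 0], [1, 0, 0, 0, 0, 0], [1, 0, 0, 0, 0, 0]]
C = [[0, 0, 0, 1, 0, 0], [0, 0, 0, 0, 1, 0], [0, 0, 0, 0, 0, 1], [0, 0, 1, 0, 0, 0]]


def witness(x):
    """Build the full witness vector, including the helper variables."""
    x2 = x * x
    x3 = x2 * x
    t = x3 + x
    return [1, x, t + 5, x2, x3, t]


def degree3_satisfied(x, out):
    """A system that accepts degree-3 constraints can state the relation in one line."""
    return x**3 + x + 5 - out == 0


s = witness(3)
print(s, r1cs_satisfied(A, B, C, s), degree3_satisfied(3, s[2]))
```

An IR that bakes in the flattened, degree-2 shape has already thrown away the structure a higher-degree backend could have exploited, which is one way to read the compatibility concern above.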

3. Hand-crafted circuits for efficiency improvement

The downside of using general-purpose models is that some simple operations, which do not require complex instructions, run with relatively low efficiency.

To summarize some earlier work:

Before the Pinocchio Protocol: Achieved verifiable computation, but verification time was very slow.

Pinocchio Protocol: Provides theoretical feasibility in terms of verifiability and verification efficiency (i.e., verification time is shorter than execution time), based on circuit systems.

TinyRAM Protocol: Compared to the Pinocchio protocol, TinyRAM is more like a virtual machine, introducing ISA, thus overcoming some limitations such as memory access (RAM), control flow, etc.

vnTinyRAM Protocol: Makes key generation not dependent on each program, providing additional generality. Extends the circuit generator to handle larger programs.

The above models all use SNARK as their backend proof system, but particularly when dealing with virtual machines, STARK and Plonk seem to be more suitable backends, fundamentally due to their constraint systems being more suitable for implementing CPU-like logic.
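One way to picture "CPU-like logic" in such a constraint system is a single transition rule applied to every pair of consecutive trace rows, with selector columns choosing the active opcode. The sketch below is a simplified, row-by-row illustration with invented column names and only two opcodes; a real AIR expresses these as polynomials over trace columns and adds boundary constraints, which are omitted here.

```python
# Hypothetical AIR-style sketch: one set of identities checked on every pair of rows.

P = 2**31 - 1  # prime modulus, illustrative only


def transition_residuals(cur, nxt):
    """Residuals that must all be 0 (mod P) between consecutive rows.

    Trace columns per row: (is_add, is_mul, operand, acc).
    """
    is_add, is_mul, operand, acc = cur
    next_acc = nxt[3]
    return [
        is_add * (is_add - 1) % P,                    # selector is a bit
        is_mul * (is_mul - 1) % P,                    # selector is a bit
        (is_add + is_mul - 1) % P,                    # exactly one opcode is active
        (is_add * (next_acc - (acc + operand))        # ADD semantics, gated by is_add
         + is_mul * (next_acc - acc * operand)) % P,  # MUL semantics (degree 3 term)
    ]


def air_satisfied(trace):
    """Apply the same transition rule to every adjacent pair of rows."""
    return all(
        r == 0
        for cur, nxt in zip(trace, trace[1:])
        for r in transition_residuals(cur, nxt)
    )


# acc: 2 --(+3)--> 5 --(*4)--> 20 ; the last row only holds the final state
trace = [(1, 0, 3, 2), (0, 1, 4, 5), (1, 0, 0, 20)]
print(air_satisfied(trace))
```

Note that the gated MUL term multiplies three trace values together, so the rule is naturally degree 3; a system limited to degree-2 rows would need extra helper columns to express it.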

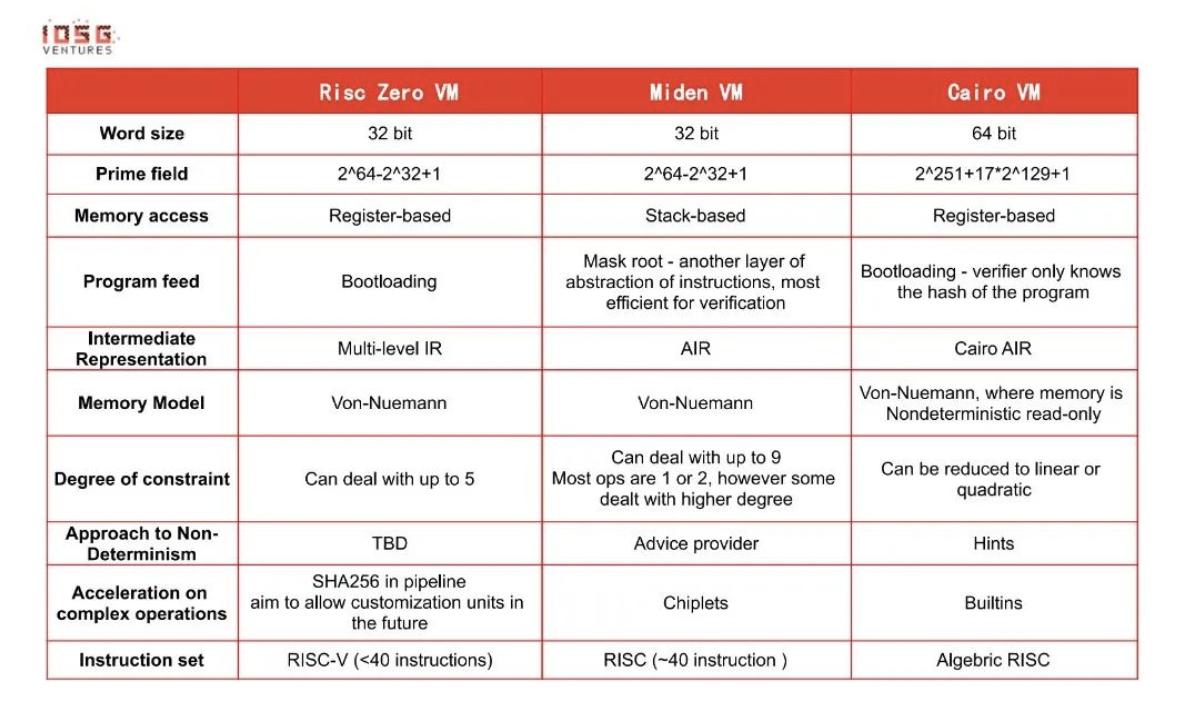

Next, this article will introduce three STARK-based virtual machines: Risc0, MidenVM, and CairoVM. In short, aside from all using STARK as the proof system, they differ in several ways:

- Risc0 utilizes Risc-V to achieve instruction set conciseness. R0 compiles through MLIR, a multi-level IR framework from the LLVM project, aiming to support various existing general-purpose programming languages like Rust and C++. Risc-V also has additional benefits, such as being more hardware-friendly.

- Miden aims for compatibility with the Ethereum Virtual Machine (EVM), essentially being a rollup of EVM. Miden currently has its own programming language but is also committed to supporting Move in the future.

- Cairo VM is developed by Starkware. The STARK proof system used by these three systems was co-invented by Eli Ben-Sasson, who is currently the president of Starkware.

Let’s delve deeper into their differences:

* How to read the table above? Some notes…

● Word size - Since the constraint systems these virtual machines are based on are AIR, their functionality is similar to CPU architectures. Therefore, choosing CPU word sizes (32/64 bits) is more appropriate.

● Memory access - Risc0 uses registers primarily because the Risc-V instruction set is register-based. Miden mainly uses a stack to store data, as AIR functions similarly to a stack. CairoVM does not use general-purpose registers because the cost of memory access in the Cairo model is relatively low.

● Program feed - Different methods come with trade-offs. For example, the MAST root method requires decoding when processing instructions, resulting in higher prover costs for programs with many execution steps. The Bootloading method attempts to balance prover costs and verifier costs while maintaining privacy.

● Non-determinism - Non-determinism is a defining attribute of problems in NP. Utilizing non-determinism helps verify past executions quickly. On the other hand, it adds more constraints, leading to some compromises in verification.

● Acceleration on complex operations - Some computations are expensive to run on a CPU-style virtual machine. For example, bit operations like XOR and AND, hash functions, signature schemes like ECDSA, and range checks are mostly native to blockchain/cryptography but not native to CPUs (except for bit operations). Implementing these operations directly through a DSL can easily exhaust proof cycles.

● Permutation/multiset - Heavily used in most zkVMs for two purposes: (1) reducing verifier costs by minimizing the storage of complete execution traces, and (2) proving that the prover knows the complete execution trace.

To close the article, the author wants to discuss the current development of Risc0 and why it excites the author.

Current Development of R0:

a. The self-developed "Zirgen" compiler infrastructure is under development. It will be interesting to compare Zirgen's performance with some existing zk-specific compilers.

b. Some interesting innovations, such as field extension, can achieve more robust security parameters and operate on larger integers.

c. Having seen the challenges of integrating ZK hardware and ZK software companies, Risc0 employs a hardware abstraction layer to make development on hardware easier.

d. Still a work-in-progress! Ongoing development!

- Supports hand-crafted circuits and multiple hashing algorithms. Currently, a dedicated SHA256 circuit has been implemented, but it does not yet meet all needs. The author believes that the choice of which circuits to optimize depends on the use cases Risc0 targets. SHA256 is a very good starting point. On the other hand, the positioning of ZKVM offers flexibility: for example, they don't have to care about Keccak if they don't want to :)

- Recursion: This is a big topic, and the author will not delve deeply into it in this report. It is important to note that as Risc0 moves to support more complex use cases/programs, the need for recursion becomes more urgent. To further support recursion, they are currently exploring a hardware-side GPU acceleration solution.

- Handling non-determinism: This is an attribute that ZKVM must deal with, while traditional virtual machines do not have this issue. Non-determinism can help virtual machines execute faster. MLIR is relatively better at handling traditional virtual machine issues, and how Risc0 embeds non-determinism into ZKVM system design is worth looking forward to.
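As a rough illustration of why non-determinism helps (and why it still costs some constraints), consider a prover that supplies a "hint" and a constraint system that only checks it. The sketch below is an assumption-laden toy in Python: the field modulus and all function names are invented, and it says nothing about how Risc0 itself handles hints. Finding a field inverse takes an exponentiation, while checking a supplied inverse takes one multiplication; integer division is similar, except that the remainder needs an extra range check.

```python
# Hypothetical sketch: the prover computes a hint, the constraints only verify it.

P = 2**31 - 1  # a Mersenne prime, used here only as an example field


def prover_hint_inverse(a):
    """Computed by the prover outside the constraints (Fermat's little theorem)."""
    return pow(a, P - 2, P)


def constraint_check_inverse(a, hint):
    """What the constraints enforce: one multiplication, no exponentiation."""
    return (a * hint) % P == 1


def prover_hint_divmod(a, b):
    """Quotient and remainder, again computed outside the constraints."""
    return divmod(a, b)


def constraint_check_divmod(a, b, q, r):
    """Checks a == q*b + r with 0 <= r < b; the range check is the added cost."""
    return a == q * b + r and 0 <= r < b


inv = prover_hint_inverse(12345)
q, r = prover_hint_divmod(100, 7)
print(constraint_check_inverse(12345, inv), constraint_check_divmod(100, 7, q, r))
```

Without the range check, a dishonest prover could pass `q = 13, r = 9` for `100 / 7`, since `13 * 7 + 9` is also 100; this is the kind of extra constraint that non-deterministic inputs bring with them.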

WHAT EXCITES ME:

a. Simple and Verifiable!

In distributed systems, PoW requires a high level of redundancy because participants do not trust each other, so the same computation must be executed repeatedly to reach consensus. With zero-knowledge proofs, agreeing on state should be as easy as agreeing that 1+1=2.

b. More Practical Use Cases:

Beyond the most direct use case of scaling, more interesting use cases will become feasible, such as zero-knowledge machine learning and data analysis. Compared to ZK-specific languages like Cairo, Rust/C++ are more universal and powerful, so more web2 use cases can run on the Risc0 VM.

c. More Inclusive/Mature Developer Community:

Developers interested in STARK and blockchain no longer need to learn a new DSL; they can use Rust/C++.