Alaya AI: How to Improve AI Data Annotation Efficiency through Gamified Design in Web3?

Author: Alaya AI

Project Overview

Alaya AI is an AI data annotation platform that combines blockchain technology, zero-knowledge proofs, a sharing-economy model, and AI-assisted data labeling and organization to support the development of the AI industry. Users earn rewards for contributing data, and blockchain and ZK technology protect their privacy and data ownership.

Alaya AI collects data through user responses to questions and uses an integrated AI system to assess the accuracy of user contributions, thereby providing corresponding Token rewards. As users' NFT levels increase, the difficulty of questions will gradually rise, covering a wide range of topics from general knowledge to specialized fields. Ultimately, Alaya AI will standardize the collected data for recognition and training by various AI models.

Market Analysis

Traditional economics treats labor, land, and capital as the main factors of production, but the logic may have quietly changed in the era of artificial intelligence, where algorithms, data, and computing power have become the three essential inputs. In the current generation of large language models, the algorithm side is still making incremental adjustments to the Transformer architecture and computing power keeps scaling up, which leaves high-quality data as the main bottleneck for further breakthroughs in models and algorithms. As companies begin to train their own large AI models, the demand for data is rising sharply.

In the traditional world, the data annotation business has already supported a market worth hundreds of billions, with well-known companies including Scale AI, Appen, Hai Tian Rui Sheng, and Yun Ce Data. However, traditional data annotation businesses struggle to effectively reach global users, exacerbating inequalities between different regions. Reports indicate that outsourced data annotators used by OpenAI in Kenya earn less than $1.50 per hour, annotating about 200,000 words daily.

In Web3, blockchain technology allows data ownership to remain with the individual data provider. Decentralized data storage and trading mechanisms let individuals control their data assets, trading and licensing them as needed in exchange for incentives and rewards. This model better protects the rights and interests of data annotators. Because blockchain records are immutable and traceable, Web3 data services can also offer higher transparency and reliability. Every data transaction, annotation task assignment, and completion status is recorded on-chain where anyone can verify it, which reduces the room for fraud and malicious behavior. Data users can trust the on-chain data without needing additional trust endorsements.
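The tamper-evidence property described above can be illustrated with a hash-linked log. This is a minimal in-memory sketch, not Alaya AI's on-chain contract: the record fields (`task`, `event`, `annotator`) and the function names are hypothetical, and a real blockchain adds consensus and replication on top of this linking idea.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first entry


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a record together with the hash of the previous entry."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> None:
    """Append a record, linking it to the current tail of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "record": record,
        "prev": prev_hash,
        "hash": record_hash(record, prev_hash),
    })


def verify_log(log: list) -> bool:
    """Recompute every link; an edited record breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != record_hash(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_record(log, {"task": "t1", "event": "assigned", "annotator": "a1"})
append_record(log, {"task": "t1", "event": "completed", "annotator": "a1"})
print(verify_log(log))                 # True
log[0]["record"]["annotator"] = "a2"   # tamper with an earlier record
print(verify_log(log))                 # False
```

Anyone holding a copy of the log can rerun `verify_log`, which is the sense in which the records are verifiable without a trusted intermediary.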

Product Design

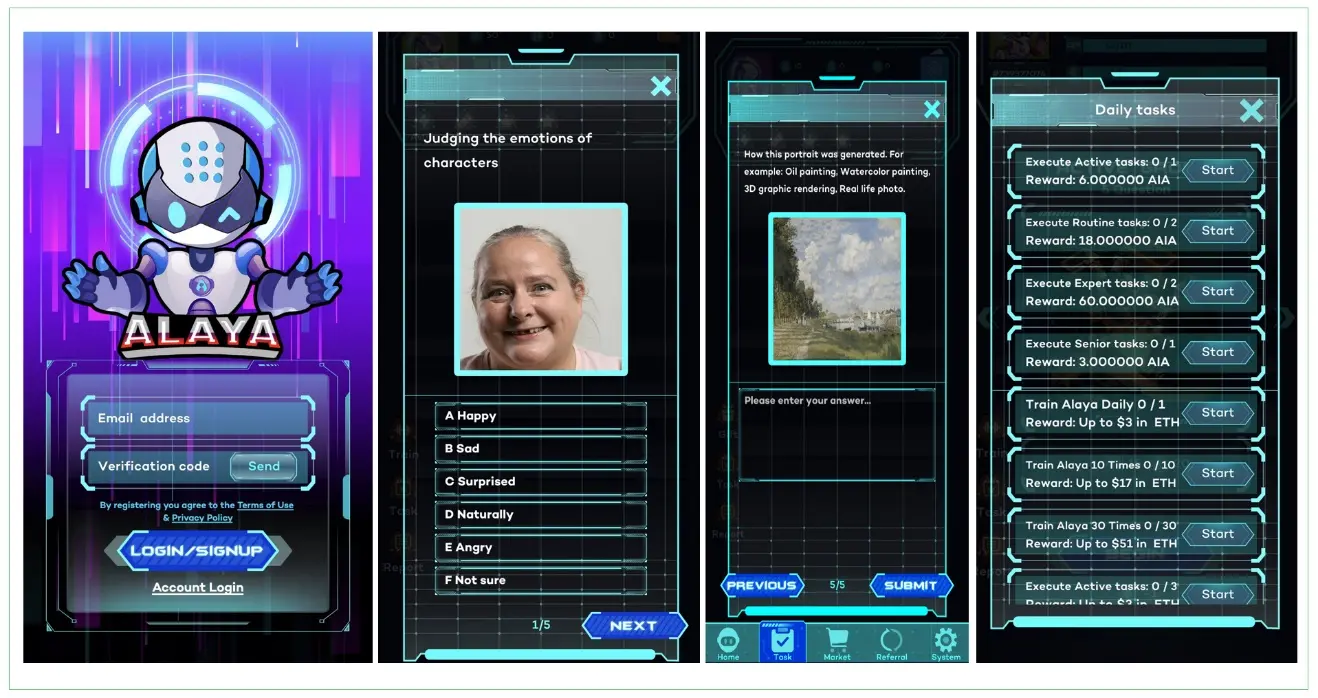

To lower the barriers to user participation, Alaya AI has designed a gamified product that collects data through user responses within the product while using cryptographic algorithms to ensure user privacy is not compromised.

For AI, By AI. Similar to the concept of reinforcement learning, Alaya AI's product integrates AI to help assess the quality of data, determine the accuracy and contribution of user judgments on AI data, and issue incentives based on this assessment. Additionally, Alaya AI will introduce a reputation mechanism and quality verification nodes for decentralized validation of annotation results. Through random sampling and cross-validation by quality verification nodes, errors or malicious annotations can be identified more efficiently, maintaining high-quality annotation results. In task allocation, Alaya AI employs an AI-assisted task allocation method that efficiently matches tasks with users. The more high-quality data users contribute, the higher their NFT level will be, and the difficulty of questions will also increase accordingly, ranging from ordinary general knowledge questions to specialized questions in specific fields (driving, gaming, film and television, etc.), and finally to advanced domain questions (medical, technology, algorithms, etc.).
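The random sampling and cross-validation step can be sketched as follows. This is an illustration under stated assumptions, not Alaya AI's actual protocol: verification nodes are modeled as plain functions that return a label for an item, the majority label among them is treated as the reference, and `audit_annotations`, `sample_rate`, and `seed` are hypothetical names.

```python
import random
from collections import Counter


def audit_annotations(annotations, verifiers, sample_rate=0.2, seed=0):
    """Spot-check a random sample of annotations against verifier majority.

    annotations: dict mapping item id -> label submitted by an annotator
    verifiers:   list of callables, each mapping item id -> label
    Returns the sorted item ids whose submitted label disagrees with the
    majority label of the verifiers.
    """
    item_ids = sorted(annotations)
    if not item_ids or not verifiers:
        return []
    rng = random.Random(seed)
    # Sample at least one item, never more than are available.
    k = min(len(item_ids), max(1, round(len(item_ids) * sample_rate)))
    flagged = []
    for item_id in rng.sample(item_ids, k):
        votes = Counter(verify(item_id) for verify in verifiers)
        majority_label, _ = votes.most_common(1)[0]
        if annotations[item_id] != majority_label:
            flagged.append(item_id)
    return sorted(flagged)


truth = {i: ("cat" if i % 2 == 0 else "dog") for i in range(10)}
submitted = dict(truth)
submitted[3] = "cat"   # a wrong label
submitted[6] = "dog"   # another wrong label
verifiers = [truth.get, truth.get, truth.get]
print(audit_annotations(submitted, verifiers, sample_rate=1.0))  # [3, 6]
```

With a lower `sample_rate` only part of the submissions is checked, so a flagged item would typically feed into the annotator's reputation rather than serve as a complete audit. An odd number of verifiers avoids ties in two-class tasks.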

Feasibility Analysis

Traditional data annotation companies may exploit their workers, but doing so significantly boosts their profitability. Web3 data annotation can distribute rewards more equitably, but does that come at the cost of platform revenue? Alaya AI's answer is that it increases overall utility by broadening the diversity of its contributors.

Traditional data annotation methods not only impose high workload requirements on individuals but also struggle to ensure sample quality. Due to meager annotation rewards, platforms mostly recruit users from developing regions, where education levels are generally low, leading to a lack of diversity in submitted samples. For high-level AI models requiring specialized knowledge, platforms find it challenging to recruit suitable annotators.

By integrating token/NFT rewards and referral bonuses, Alaya combines social and gaming elements into ordinary data annotation, effectively expanding community size and improving retention rates through daily check-ins. While controlling the reward limits for individual users through tasks, Alaya's viral referral system allows high-quality users' earnings to grow indefinitely as their social network expands.

Essentially, centralized data platforms in the Web2 era rely heavily on a few users who continuously provide large volumes of samples, whereas Alaya reduces the amount of data each user contributes while increasing the number of participating users. With lower individual workloads, the quality of contributed data is expected to improve, and the data becomes more representative. Because it reaches a larger number of users, the data collected by a decentralized annotation platform better reflects humanity's collective intelligence and is less prone to sampling bias.

To prevent individual users from negatively impacting data quality due to unfamiliarity with the question domain or maliciously submitting incorrect answers, the Alaya AI platform employs a normal distribution model to validate data and automatically exclude or standardize extreme values. Furthermore, Alaya relies on self-developed optimization algorithms to cross-reference user answers and weights for validation, eliminating the need for manual checks and corrections, thereby further reducing data costs. The validity threshold for data will be dynamically adjusted based on the sample size of each task to avoid excessive corrections and minimize data distortion.
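One way to picture a normal-distribution filter whose threshold depends on sample size is Chauvenet's criterion, which rejects a value when fewer than half an observation that extreme would be expected among n normally distributed samples. The source does not name the rule Alaya AI uses, so this is a swapped-in textbook technique, and `chauvenet_threshold` and `filter_extremes` are hypothetical names. The sketch assumes numeric answers.

```python
from statistics import NormalDist, mean, stdev


def chauvenet_threshold(n: int) -> float:
    """Largest acceptable |z| for a sample of size n.

    Solves n * P(|Z| > z) = 0.5 for z, so the limit widens as n grows.
    """
    return NormalDist().inv_cdf(1 - 1 / (4 * n))


def filter_extremes(values):
    """Split values into (kept, rejected) using a size-dependent z limit."""
    n = len(values)
    if n < 3:
        return list(values), []   # too few points to estimate spread
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:
        return list(values), []   # all answers identical
    limit = chauvenet_threshold(n)
    kept, rejected = [], []
    for v in values:
        z = abs(v - mu) / sigma
        (kept if z <= limit else rejected).append(v)
    return kept, rejected


answers = [10, 11, 9, 10, 12, 10, 11, 9, 10, 50]
kept, rejected = filter_extremes(answers)
print(round(chauvenet_threshold(len(answers)), 2))  # 1.96
print(rejected)                                     # [50]
```

Because the limit grows with n, a large task tolerates values further from the mean before rejecting them, which is one way to read the goal of avoiding excessive corrections.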

Technical Features

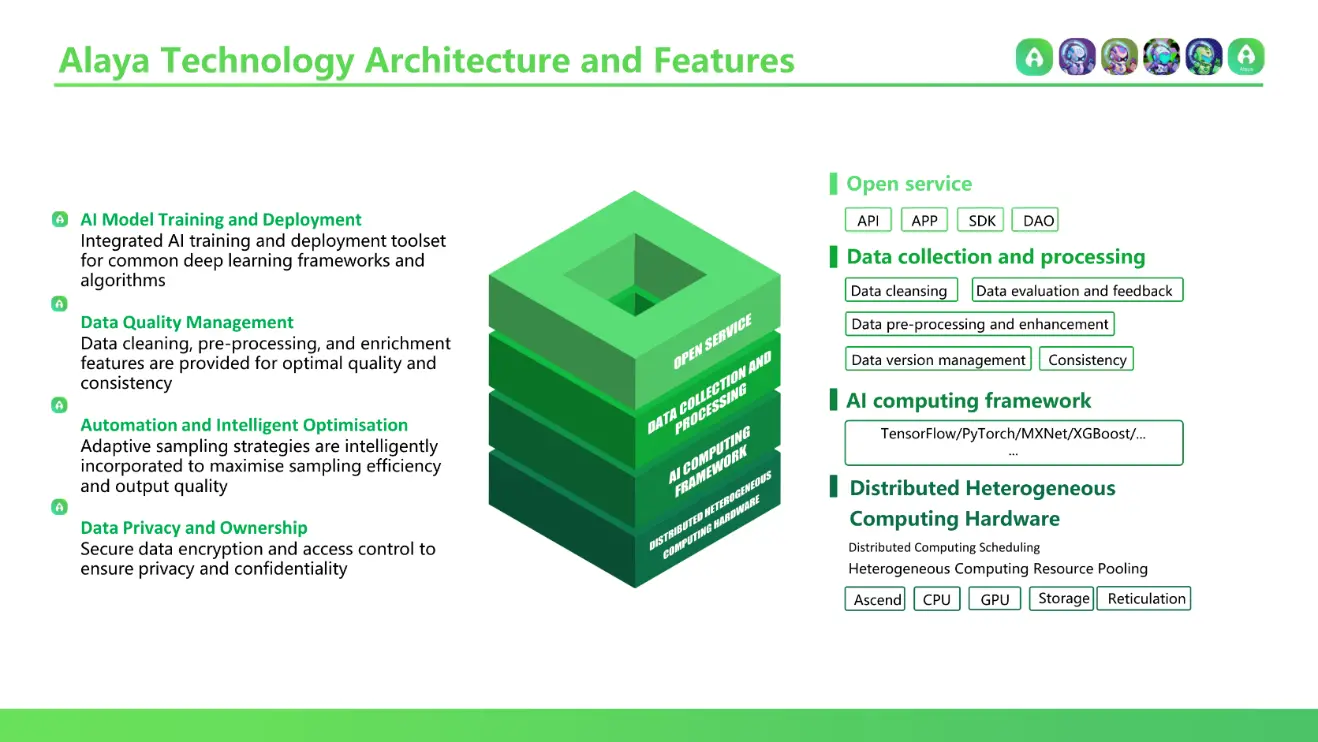

As an intermediary layer between data producers (individual users) and data consumers (AI models), Alaya AI collects user-annotated data, processes it, and delivers it for AI model use.

Alaya AI adopts an innovative Tiny Data model, optimizing and iterating on traditional big data to enhance deep learning training effectiveness from multiple aspects:

- Data Quality Optimization

The Tiny Data model focuses on high-quality small-scale datasets, improving data accuracy and consistency through data cleaning and annotation optimization. High-quality training data can effectively enhance the model's generalization ability and robustness, reducing the negative impact of noisy data on model performance.

- Data Feature Concentration

The Tiny Data model employs feature engineering and data concentration techniques to extract key features from data, removing redundant and irrelevant information. The concentrated dataset contains a higher density of effective information, accelerating the model's convergence speed while reducing computational resource consumption.

- Sample Balancing Optimization

The performance of deep learning models is often affected by imbalanced data distribution. The Tiny Data model employs intelligent data sampling strategies to balance samples from different categories, ensuring sufficient training data for each category and improving the model's classification accuracy.

- Active Learning Strategy

The Tiny Data model introduces active learning strategies to dynamically adjust data selection and annotation processes based on model feedback. Active learning can prioritize annotating samples that have the greatest impact on model performance, avoiding inefficient repetitive labor and improving data utilization efficiency.

- Incremental Learning Mechanism

The Tiny Data model supports incremental learning, allowing new data to be continuously added for training based on the existing model, achieving iterative optimization of model performance. Incremental learning enables the model to continuously learn and evolve, adapting to the ever-changing demands of application scenarios.

- Transfer Learning Capability

The Tiny Data model possesses transfer learning capabilities, allowing pre-trained models to be applied to similar new tasks, significantly reducing the data requirements and training time for new tasks. Through the transfer and reuse of knowledge, the Tiny Data model can achieve good training results in small sample scenarios.
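The active learning item above can be made concrete with uncertainty sampling, a common strategy in which the samples the current model is least sure about are sent for annotation first. The source does not say which selection rule the Tiny Data model uses, so entropy-based ranking is an assumption here, and `select_for_annotation` and `budget` are hypothetical names.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a class-probability vector."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Pick the `budget` samples with the most uncertain predictions.

    predictions: dict mapping sample id -> list of class probabilities
                 produced by the current model.
    """
    ranked = sorted(
        predictions,
        key=lambda sample_id: entropy(predictions[sample_id]),
        reverse=True,
    )
    return ranked[:budget]


model_output = {
    "a": [0.98, 0.02],   # confident, little to gain from a label
    "b": [0.55, 0.45],
    "c": [0.80, 0.20],
    "d": [0.50, 0.50],   # maximally uncertain
}
print(select_for_annotation(model_output, budget=2))  # ['d', 'b']
```

After the selected samples are labeled, the model is retrained and the ranking is recomputed, so the annotation effort keeps following the model's current weaknesses.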

Additionally, Alaya AI integrates AI training and deployment tools, supporting commonly used deep learning frameworks, enabling various AI models to be directly recognized and utilized, thus lowering the upstream model training costs. Furthermore, by utilizing cryptographic algorithms such as zero-knowledge proofs and access control technologies, Alaya AI fully protects user privacy throughout the process.

Ecosystem Development

Currently, Alaya AI supports the Arbitrum and opBNB mainnets, allows email registration, and offers a mobile app that is already available on Google Play.

On the business (B2B) side, Alaya AI has established stable collaborations with over ten AI technology companies, and the number of partnerships continues to rise. This gives Alaya a stable cash flow, allowing it to consistently provide cash and Token rewards to users.

On the consumer side, Alaya AI currently has over 400,000 registered users, more than 20,000 daily active users, and over 1,500 daily on-chain transactions. Additionally, Alaya has built a decentralized autonomous community that will democratically decide the direction of the product in an open and transparent manner.

In the future, Alaya AI is expected to further integrate with DePIN, embedded in integrated AI smart hardware products (e.g., Rabbit R1), to obtain data from users' daily interactions and utilize the idle computing power of devices. Furthermore, through collaboration with decentralized computing platforms (e.g., Akash, Golem), Alaya AI can establish a unified market for AI data and computing power, allowing AI developers to focus solely on algorithm optimization. In terms of data storage, Alaya AI can store completed annotated data using decentralized storage protocols such as IPFS and Arweave, and actively collaborate with decentralized AI model markets (e.g., Bittensor) to train decentralized models with decentralized data.

Token Incentives

Alaya AI's token system is mainly divided into two parts: one for user incentives and the other for ecosystem incentives.

The first part is the AIA token, which is Alaya's basic platform incentive token. Users can earn AIA token rewards by completing tasks, achieving milestones, and participating in other activities within the product. AIA tokens can also be used for upgrading user NFTs, participating in activities, and obtaining unique achievements, all of which can increase players' basic output within the product. AIA tokens have basic output and consumption scenarios, with both mutually reinforcing each other.

The second part is the AGT token, which is Alaya's governance token with a maximum issuance of 5 billion. AGT is used for ecosystem development, upgrading high-level NFTs, and participating in community governance. Users must hold AGT to participate in community governance, data review, and request issuance.

Alaya AI's dual-token model separates economic incentives from governance, avoiding significant fluctuations in governance tokens that could affect the stability of the system's economic incentives, thus providing the entire system with stronger scalability and promoting long-term healthy development.

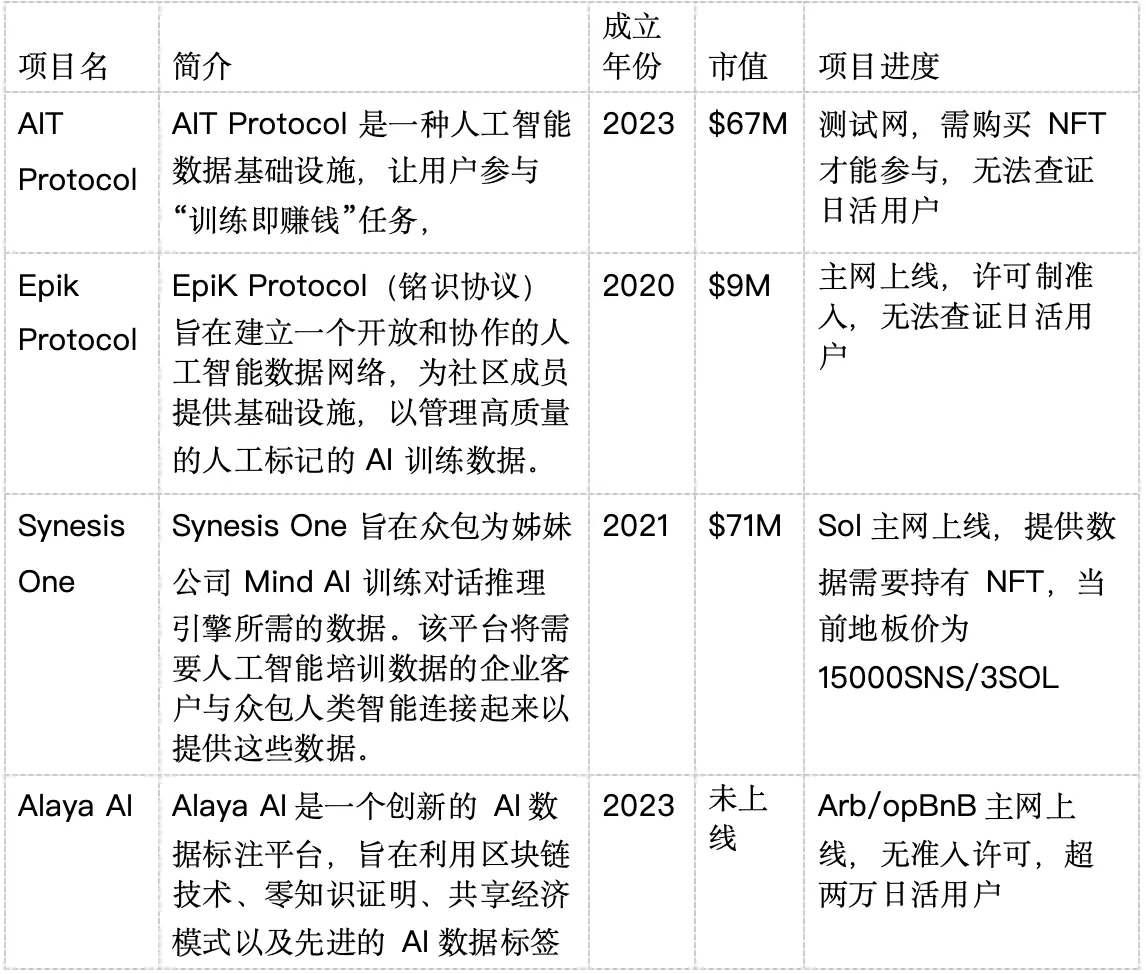

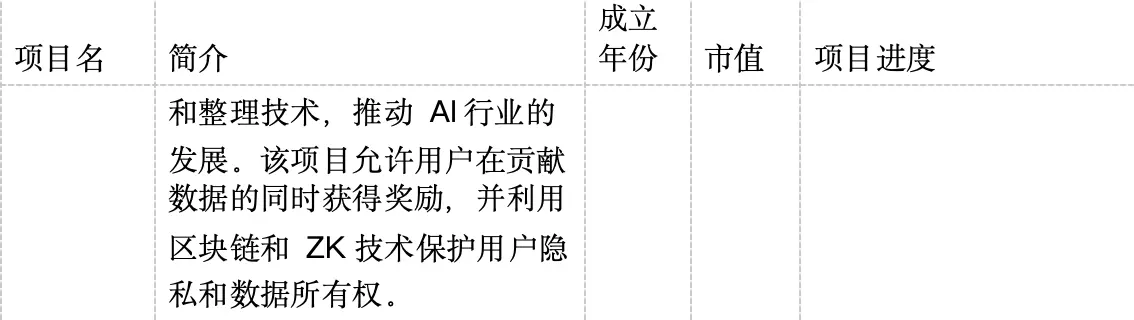

Competitive Analysis

The comparison list of existing decentralized data annotation projects is as follows:

The competitive analysis suggests that the tokens of newer projects tend to outperform those of older projects, and that projects backed by real user data significantly outperform those lacking users. As an emerging project with over 400,000 registered users, more than 20,000 daily active users, and over 1,500 daily on-chain transactions, Alaya AI is likely to receive better value support after its token issuance.

Reference

Website: https://www.aialaya.io/

Twitter: https://twitter.com/Alaya_AI

Telegram: https://t.me/Alaya_AI

Medium: https://medium.com/@alaya-ai

Deck: https://docsend.com/view/tvrctaq5hyen5max

Contact Person: ALAYA AI

City: Auckland New Zealand