Solana Innovation: Solana's Concurrent Leader Mechanism, Solving MEV and Building a Global Price Discovery Engine

Author: Anatoly Yakovenko

Compiled by: Shenchao TechFlow

Overview

MEV is a fundamental issue in permissionless blockchains. Like most permissionless blockchains, Solana aims to minimize the MEV extracted by chain operators from users.

Solana's approach is to reduce MEV by maximizing competition among leaders (i.e., block producers). This means shortening slot times, reducing the number of consecutive slots assigned to a single leader, and increasing the number of concurrent leaders per slot.

In general, more leaders per second means that users have more options after waiting T seconds, allowing them to choose the best offer from the upcoming leaders. More leaders also mean that excellent leaders can offer block space at a lower cost, making it easier for users to transact only with good leaders and exclude transactions from bad leaders. The market should decide what is good and what is bad.

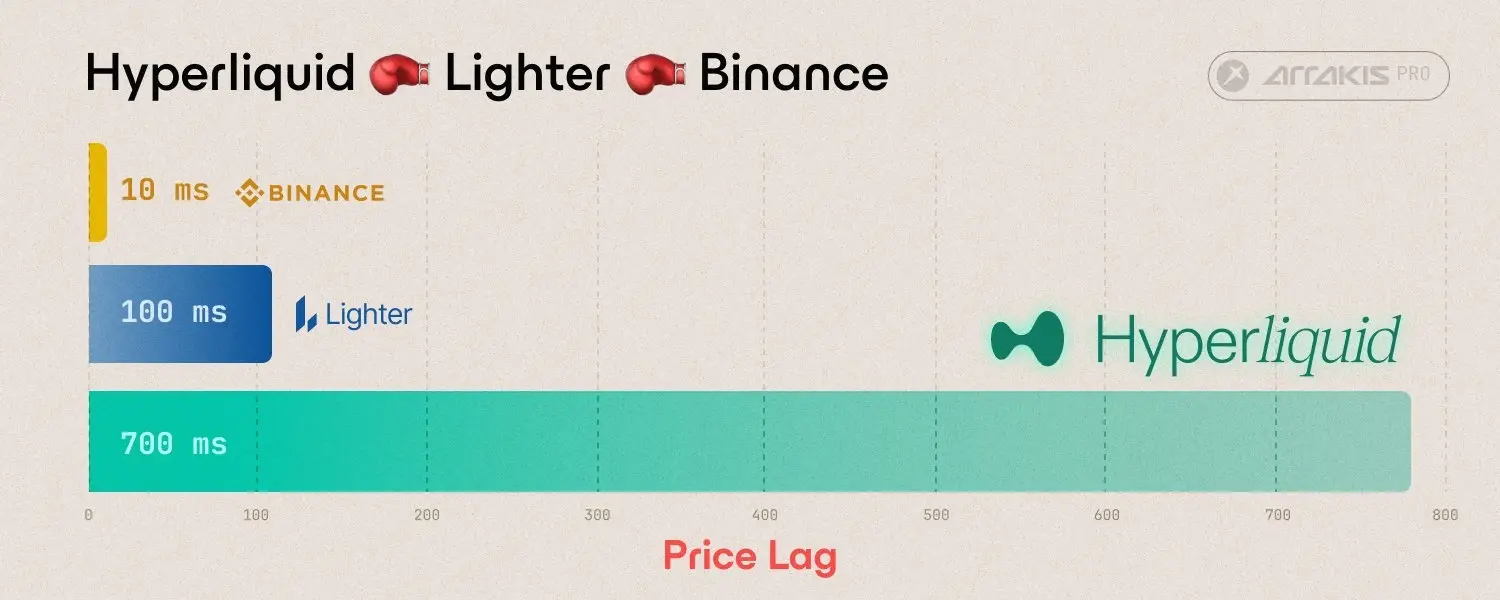

Solana's larger vision is to build a global permissionless price discovery engine that can compete with the best-performing centralized exchanges (CEXs).

If an event that impacts the market occurs in Singapore, the news still needs to be transmitted via fiber optics at the speed of light to a CEX in New York. By the time the news arrives in New York, the leaders in the Solana network should have already broadcasted this news in a block. Unless a physical internet partition occurs simultaneously, the state of Solana should already reflect this news by the time it reaches New York. Therefore, there should be no arbitrage opportunity between the CEX in New York and Solana.

To fully achieve this goal, Solana needs many concurrent leaders and highly optimistic confirmation guarantees.

Configuring Multiple Leaders

Just like the current leader schedule, the system will configure each slot with 2 leaders instead of 1. To distinguish between the two leaders, one channel is labeled A and the other B. A and B can rotate independently. The questions that need to be answered to implement this plan are:

What if blocks A and B arrive at different times or fail?

How to merge the transaction order from blocks A and B?

How to allocate block capacity between A and B?

Transmitting Concurrent Blocks

To understand the specific process, we need a quick overview of Turbine.

When leaders build blocks, they split them into shards. A batch of 32 shards is a Reed-Solomon encoded batch of 32 code shards. A batch of 64 shards is merkleized and signed as a root, linking it to the previous batch.

Each shard is sent through independent deterministic random paths. Each last batch's retransmitter signs the root.

From the receiver's perspective, each receiver needs to receive 32 shards from verified retransmitters. Any missing shards will be repaired randomly.

This number can be increased or decreased, with little impact on latency.

Assuming the retransmitter's shard path sampling is sufficiently random and weighted by stake, the stake required for a coordinated partitioned network will far exceed ε stakes, whether in arrival time or data. If a receiver detects that 32/64 (configurable) shards of each batch arrive within T time, it is likely that each node does as well. This is because 32 random nodes are large enough to make it unlikely that they all randomly end up in the same partition.

If a partition occurs, consensus needs to resolve it. This does not affect security but is relatively slow.

Multi-Block Production

If transmitting a single block, each receiver (including the next leader) will see each block's shard batches arrive. If a block is incomplete within T milliseconds, the current leader will skip that block and build a fork without it. If the leader is incorrect, all other nodes will vote on the block, and the leader's block will be skipped. Non-faulty leaders will immediately switch to the heaviest fork indicated by the votes.

In the case of multi-block transmission, each node will need to wait up to T milliseconds and then vote on the observed block partitions. For two concurrent leaders, the possible scenarios are: A, B, or A and B. Additional delays will only occur in the case of block delays. Under normal operation, all blocks should arrive simultaneously, allowing each validator to vote immediately after both arrive. Therefore, T may practically approach zero.

The attack that needs focused defense is whether a leader with a very small amount of staked tokens can reliably cause a network split by slightly delaying the transmission of a block at the slot boundary, forcing the network to spend a significant amount of time resolving the issue through the consensus mechanism. A portion of the network will vote for A, another portion will vote for B, and yet another portion will vote for both A and B simultaneously. All three split scenarios need to be resolved through the consensus mechanism.

Specifically, the goal of zero neighborhoods should be to ensure that nodes recover blocks simultaneously. If an attacker has a coordinated node in the zero neighborhood, they can normally transmit 31/64 shards and allow the attacker to selectively transmit the last shard, attempting to create a partition. Honest nodes can detect which retransmitters are delayed and immediately push the missing shards to them after any single honest node recovers the block. Retransmitters can continue if they receive shards or recover them from anywhere. Therefore, blocks should be recovered by all nodes shortly after an honest node recovers them. Testing is needed to determine the waiting time, whether it is absolute or weighted by the arrival time of each shard, and whether to use stake node reputation.

The probability of collaborative leaders and retransmitters in each block is approximately P leader stakes (64P retransmitter stakes). 1% of the stakes can attempt an attack in the ½ shard batch arranged by the attacker as a leader. Therefore, detection and mitigation need to be sufficiently robust.

This attack has little impact on the next leader, as asynchronous execution allows unused capacity to roll over. Therefore, if the current leader forces the next leader to skip a slot, and the next leader has 4 consecutive slots, the unused capacity from the skipped slot can roll over, allowing the leader to re-include the transactions from the skipped slot.

Merging Concurrent Blocks

If users send the same transaction to leaders A and B simultaneously to increase the chances of being included or to rank first in the block, this will lead to resource waste. If this occurs, increasing the number of concurrent leaders will have very limited performance improvement, as they are just processing double the garbage transactions.

To avoid duplicate transactions, the top N of the fee payers will determine which leader channel the transaction is valid in. In this example, the highest position will choose A or B. Fee payers must be assigned to an exclusive channel so that the leaders can determine that the fee payer is valid and has not spent all their lamports (the smallest currency unit in the Solana blockchain) elsewhere.

This will force spammers to pay at least twice for logically identical transactions, but to increase the probability of being the first transaction, spammers may still send logically identical transactions.

To prevent this behavior, users can choose to include an additional 100% burn order fee beyond the leader's priority fee. The highest order fee is executed first. Otherwise, a first-in-first-out (FIFO) ordering is used. In the case of a tie, a deterministic random permutation is used to resolve the order. Thus, for spammers, increasing their order fee and executing first is more cost-effective than paying the inclusion fee twice.

To handle bundled and reordered transaction sequences, the system needs to support bundled transactions, which can add an order fee to cover the sorting cost of the entire transaction sequence. Fee payers are only valid in their designated channel, so bundling can only manipulate the sequence within their own channel.

Alternatively, order fees may not be necessary. If FIFO ordering is used, and spammers are always charged priority fees across all channels, it may only be necessary to allow the market to decide the cost of paying N leaders to improve inclusion chances versus paying the most likely recent leader to include the transaction first.

Managing Block Resources

In a blockchain network, when there are two concurrent leaders, the block capacity limits across the system need to be evenly distributed. Specifically, not only the total capacity but also each specific limit, such as write lock limits—no account can write more than 6 million compute units (CUs), while each leader can schedule a maximum of 24 million CUs of transactions. This way, even in the worst case, the merged block will not exceed the system's total capacity limit.

This mechanism may lead to fee fluctuations and underutilization of resources, as the scheduling priority fees will be determined by each leader's capacity, while each leader knows little about the scheduling status of other concurrent leaders.

To mitigate underutilization of resources and the resulting fee spikes, any unused block capacity should roll over to future blocks. That is, if the current merged block uses less than X on write locks, total bytes, or total compute units (CUs), then K*X should be added to the next block, where 0 < K < 1, until a certain maximum value. Asynchronous execution can lag behind the chain top by up to one epoch, so capacity rolling can be quite aggressive.

According to recent block data, most blocks are typically filled to 80%, while write lock limits are far below 50%. Generally, future blocks should always have some spare capacity. Since blocks may temporarily exceed capacity limits, execution must be asynchronous with the consensus process. For more details on asynchronous execution proposals, see the APE article.